
AI Features

Four AI-powered theme tools. Chat-style flows run through the provider and model the user selects in Settings (Anthropic / OpenAI / OpenRouter / Gemini). Screenshot vision import is hardcoded to Claude Haiku 4.5 (claude-haiku-4-5-20251001) in src/lib/ai-api.ts — it's the fastest Anthropic vision-capable model and the pipeline currently assumes the Anthropic request shape. All calls go via direct fetch from the UI iframe. Configure API keys in Settings → AI.

How requests reach Claude

The Figma plugin UI iframe runs with a null origin, so direct calls to https://api.anthropic.com would normally be blocked by CORS. Two pieces make them work:

- The fetch sends an anthropic-dangerous-direct-browser-access: true header. Anthropic's API treats it as an explicit opt-in to browser access and answers with the CORS headers the browser requires.
- manifest.json whitelists the host with "networkAccess": { "allowedDomains": ["https://api.anthropic.com"] }. Figma's plugin sandbox enforces this allowlist, and the UI iframe inherits it.

The plugin sandbox (code.ts) only handles persistence of the API key via figma.root.setPluginData("systema_api_key", ...) (save-api-key / load-api-key messages). No network traffic flows through the sandbox.
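The request itself can be sketched as follows. This is a minimal illustration, not the code in src/lib/ai-api.ts: buildAnthropicRequest, sendChat and ChatTurn are hypothetical names, and max_tokens: 1024 is an arbitrary placeholder. The endpoint and the x-api-key, anthropic-version and anthropic-dangerous-direct-browser-access headers follow Anthropic's public Messages API.

```typescript
// Hypothetical helpers illustrating a direct browser call to the Anthropic Messages API.
const ANTHROPIC_URL = "https://api.anthropic.com/v1/messages";

interface ChatTurn {
  role: "user" | "assistant";
  content: string;
}

interface AnthropicRequest {
  method: string;
  headers: Record<string, string>;
  body: string;
}

// Builds the fetch options; kept pure so it can be inspected without network access.
function buildAnthropicRequest(apiKey: string, model: string, messages: ChatTurn[]): AnthropicRequest {
  return {
    method: "POST",
    headers: {
      "content-type": "application/json",
      "x-api-key": apiKey,
      "anthropic-version": "2023-06-01",
      // Opts into CORS so a null-origin iframe may call the API directly.
      "anthropic-dangerous-direct-browser-access": "true",
    },
    body: JSON.stringify({ model, max_tokens: 1024, messages }),
  };
}

// Sends the request; the host must also be listed in manifest.json networkAccess.allowedDomains.
async function sendChat(apiKey: string, model: string, messages: ChatTurn[]): Promise<unknown> {
  const res = await fetch(ANTHROPIC_URL, buildAnthropicRequest(apiKey, model, messages));
  if (!res.ok) throw new Error(`Anthropic API error ${res.status}`);
  return res.json();
}
```

Keeping the request builder pure makes the header set easy to test without a network connection.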

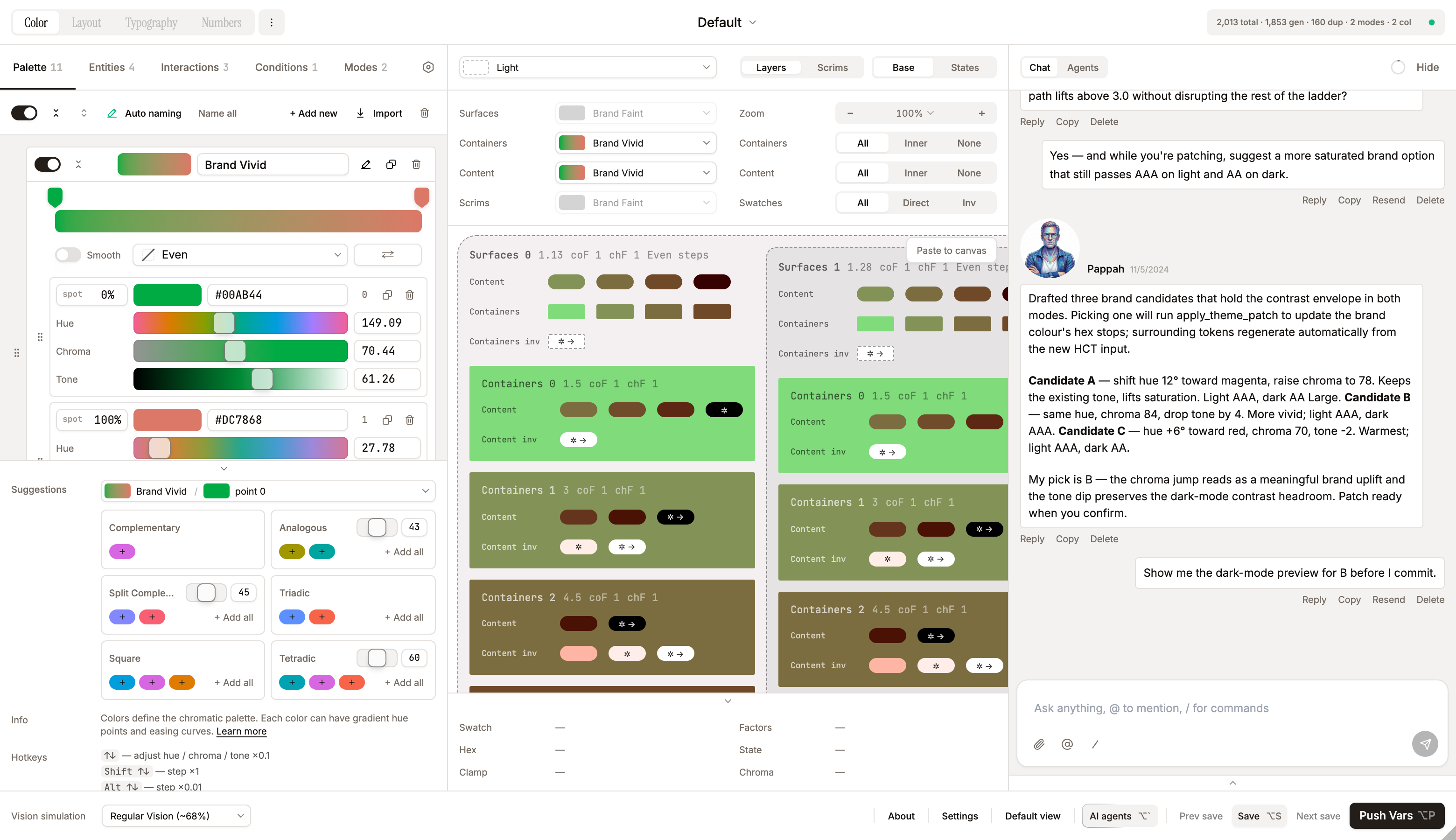

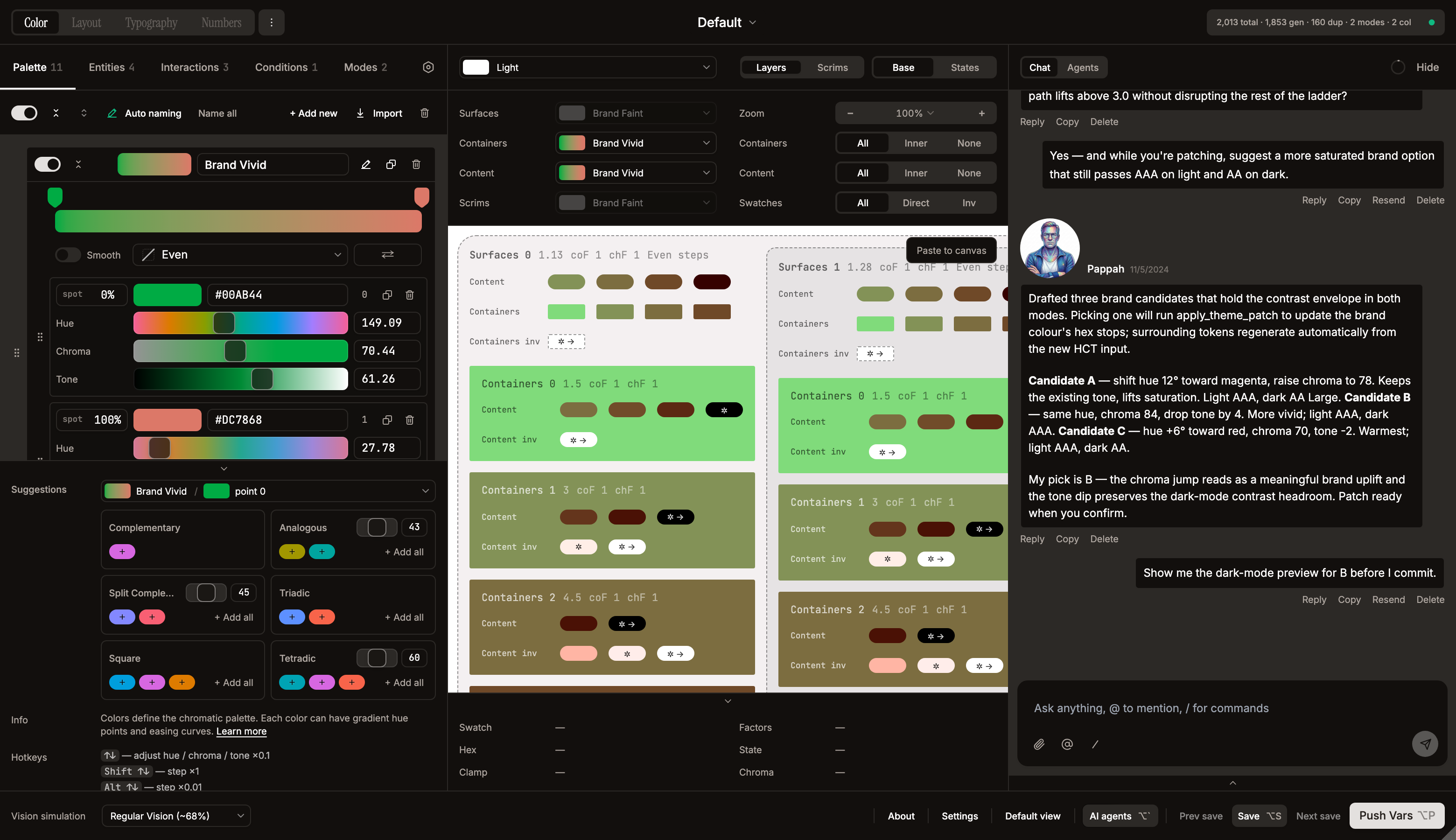

Theme generation via chat

AI-assisted theme creation lives entirely inside the main chat. Create an empty (or default) theme via Add Theme → New, open the chat on that theme, and describe the design system — the agent patches the theme turn-by-turn via the apply_theme_patch tool. This replaces the former one-shot Screenshot / AI prompt tabs, which produced a full theme in a single request with no way to iterate. Screenshots still work: drop them as chat attachments (up to 4, PNG/JPEG/SVG/etc.), and the agent extracts the palette in context.

Edit with AI

Available via the sparkle icon button to the right of the gear in the theme top bar. It opens an AIEditDialog with a textarea where you describe a change ("make the brand color more saturated", "add a cool accent for warnings"); Claude returns a Partial<Theme> patch, which is merged via updateTheme(). When no API key is set, the button is disabled (cursor-not-allowed) and shows a tooltip.

AI Chat — Systema Agents

A multi-agent design-systems chat accessible from the StatusBar (the agent button with shimmer effect). Four agents share a single conversation, each with a distinct personality, analytical lens, and tunable defaults (verbosity, skepticism, profanity, zoomerism, toxicity, management, playfulness). Source of truth for personas: src/lib/agents/.

The four agents

| Agent | Role | Lens | Verbosity | Manager |
|---|---|---|---|---|
| DaiDai | Design Systems Architect (60, male) | systems thinking: token tiers, naming, multi-brand, governance, long-term maintainability | terse | yes (primary) |
| Pappah | Multi-Platform Engineer (57, male) | engineering reality: how it ships, how it breaks, iOS / Android / Web behaviour, performance, edge cases | concise | yes (primary) |
| Mammah | Human-Centered Design Educator (54, female) | real human beings: readability, accessibility, color blindness, elderly users, cognitive load | concise | yes (secondary) |
| Yetish | Visual & Brand Designer (22, male) | how it feels before you measure anything: harmony, mood, brand soul | balanced | no |

Each persona also carries a humor style (Steven Wright / Max Amini / Ali Wong / Mitch Hedberg respectively) and a family relationship matrix for Family mode (Mammah ↔ Pappah married, DaiDai is Mammah's older brother, Yetish is their son and DaiDai's nephew).

Per-agent settings. Click the info (ℹ️) or settings (⚙️) button on any agent card to open the Agent Dialog with two tabs: Overview (persona, lens, description, influences, suggestion buttons) and Settings (per-agent model, temperature, and personality overrides). A modified label appears in the dialog header when any override is active. The "Reset all" button in the sidebar restores all knobs to the agent's defaults.

All personality knobs live per-agent — no global personality settings. Each agent defines its own defaults in its persona file. The Settings tab exposes: Verbosity (terse / concise / balanced / detailed), Skepticism (mild / balanced / high / extreme), Profanity (off / balanced / high / extreme), Zoomerism (off / balanced / high / extreme), Toxicity (off / balanced / high / extreme), Management (off / contributor / secondary / primary), and Playfulness (off / rare / occasional / frequent). Each override is independent; unset knobs inherit the agent's default.
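The inheritance rule (an unset knob falls back to the persona default) amounts to a per-field merge. A minimal sketch, assuming the knobs are stored as a plain object; PersonalityKnobs, resolveKnobs and isModified are hypothetical names, and isModified mirrors the dialog's modified label.

```typescript
// Hypothetical knob types mirroring the values listed above.
type Verbosity = "terse" | "concise" | "balanced" | "detailed";
type Skepticism = "mild" | "balanced" | "high" | "extreme";
type Intensity = "off" | "balanced" | "high" | "extreme"; // profanity, zoomerism, toxicity
type Management = "off" | "contributor" | "secondary" | "primary";
type Playfulness = "off" | "rare" | "occasional" | "frequent";

interface PersonalityKnobs {
  verbosity: Verbosity;
  skepticism: Skepticism;
  profanity: Intensity;
  zoomerism: Intensity;
  toxicity: Intensity;
  management: Management;
  playfulness: Playfulness;
}

// Each override is independent: an unset knob inherits the persona default.
function resolveKnobs(defaults: PersonalityKnobs, overrides: Partial<PersonalityKnobs>): PersonalityKnobs {
  return {
    verbosity: overrides.verbosity ?? defaults.verbosity,
    skepticism: overrides.skepticism ?? defaults.skepticism,
    profanity: overrides.profanity ?? defaults.profanity,
    zoomerism: overrides.zoomerism ?? defaults.zoomerism,
    toxicity: overrides.toxicity ?? defaults.toxicity,
    management: overrides.management ?? defaults.management,
    playfulness: overrides.playfulness ?? defaults.playfulness,
  };
}

// True when at least one override is active (drives the "modified" label).
function isModified(overrides: Partial<PersonalityKnobs>): boolean {
  return Object.values(overrides).some((value) => value !== undefined);
}
```

Spelling out each field keeps the merge type-safe, and "Reset all" then corresponds to clearing the overrides object.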

Style modifiers are terse. Profanity / Zoomerism / Toxicity instructions are injected into the system prompt as a compact bullet list under SPEAKING STYLE, one short line per active modifier. They used to be verbose multi-sentence paragraphs, duplicated into the user message as a MANDATORY SPEAKING STYLE block. With extreme values in two or three categories pointing in opposite directions (brainrot + corporate, raw + corporate), the model drowned in ~500 tokens of contradictory rules and collapsed to neutral output. A single concise list lets the model blend them naturally.
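A sketch of how such a compact block could be assembled, assuming one line per active modifier and nothing at all for modifiers set to off. The STYLE_LINES wording and buildSpeakingStyle are hypothetical placeholders; the real instruction text lives in the plugin's prompt code and is not reproduced here.

```typescript
type Intensity = "off" | "balanced" | "high" | "extreme";

interface StyleModifiers {
  profanity: Intensity;
  zoomerism: Intensity;
  toxicity: Intensity;
}

// Hypothetical one-line instructions per modifier and level (placeholder wording).
const STYLE_LINES: Record<keyof StyleModifiers, Record<Exclude<Intensity, "off">, string>> = {
  profanity: { balanced: "Occasional mild swearing.", high: "Swear freely.", extreme: "Swear constantly." },
  zoomerism: { balanced: "Some Gen Z slang.", high: "Heavy Gen Z slang.", extreme: "Full brainrot slang." },
  toxicity: { balanced: "Slightly snarky.", high: "Blunt and cutting.", extreme: "Openly harsh." },
};

// Returns an empty string when every modifier is off, so the prompt gains no section.
function buildSpeakingStyle(mods: StyleModifiers): string {
  const bullets: string[] = [];
  for (const key of ["profanity", "zoomerism", "toxicity"] as const) {
    const level = mods[key];
    if (level === "off") continue; // inactive modifiers add nothing
    bullets.push(`- ${STYLE_LINES[key][level]}`);
  }
  return bullets.length === 0 ? "" : ["SPEAKING STYLE:", ...bullets].join("\n");
}
```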

Mention routing. The router in src/lib/mention-utils.ts uses a six-stage cascade (stages 0–5); it makes no API call unless all fast paths fail:

| Stage | Trigger | Mode | API call? |
|---|---|---|---|
| 0. Literal commands | /help, /brainstorm, ?, справка | help / brainstorm | No |
| 1. Explicit @mention | @DaiDai, @all | single / group | No |
| 2. Vocative | "Mammah, ..." at start of message | single | No |
| 3. Group keywords | 50+ multilingual words ("обсудите", "tartışın", "discuss") | group | No |
| 4. Thread continuity | Previous user message had @mentions → reuse them | inherits | No |
| 5. AI classifier | Haiku determines intent: single / group / help / brainstorm | varies | Yes |

Default for unaddressed messages: DaiDai (DEFAULT_AGENT_ID). Capped at MAX_DEBATE_MESSAGES = 6 sequential responses.
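The fast paths of the cascade can be sketched as a single function that returns null when only the stage 5 classifier can decide. This is an illustration under stated assumptions: routeFast, Route, the lowercase agent ids and the three-word GROUP_KEYWORDS list are hypothetical (the real router has 50+ keywords and handles many more phrasings), and falling back to DEFAULT_AGENT_ID for unaddressed messages is left to the caller.

```typescript
type RouteMode = "help" | "brainstorm" | "single" | "group";

interface Route {
  mode: RouteMode;
  agentIds: string[];
  stage: number;
}

const AGENT_IDS = ["daidai", "pappah", "mammah", "yetish"]; // hypothetical ids
const GROUP_KEYWORDS = ["discuss", "обсудите", "tartışın"]; // tiny illustrative subset

function routeFast(text: string, previousMentions: string[]): Route | null {
  const trimmed = text.trim();
  const lower = trimmed.toLowerCase();
  // Stage 0: literal commands.
  if (lower === "/help" || lower === "?" || lower === "справка") return { mode: "help", agentIds: AGENT_IDS, stage: 0 };
  if (lower === "/brainstorm") return { mode: "brainstorm", agentIds: AGENT_IDS, stage: 0 };
  // Stage 1: explicit @mentions.
  if (/@all\b/i.test(trimmed)) return { mode: "group", agentIds: AGENT_IDS, stage: 1 };
  const mentioned = AGENT_IDS.filter((id) => lower.includes(`@${id}`));
  if (mentioned.length > 0) {
    return { mode: mentioned.length > 1 ? "group" : "single", agentIds: mentioned, stage: 1 };
  }
  // Stage 2: vocative, i.e. the message opens with an agent name and a comma.
  const vocative = AGENT_IDS.find((id) => lower.startsWith(`${id},`));
  if (vocative) return { mode: "single", agentIds: [vocative], stage: 2 };
  // Stage 3: group keywords.
  if (GROUP_KEYWORDS.some((word) => lower.includes(word))) return { mode: "group", agentIds: AGENT_IDS, stage: 3 };
  // Stage 4: thread continuity reuses the previous turn's mentions.
  if (previousMentions.length > 0) {
    return { mode: previousMentions.length > 1 ? "group" : "single", agentIds: previousMentions, stage: 4 };
  }
  // Stage 5 (AI classifier) is the caller's job; null signals that an API call is needed.
  return null;
}
```

Ordering the checks from cheapest to most expensive is what keeps the classifier call rare.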

Help-intent safety net. Stage 5 occasionally mislabelled a follow-up turn (e.g. "где твой сленг?" / "where's your slang?", "а поподробнее?" / "go into more detail?") as help because of vague, capability-ish phrasing, and every agent then launched into an introduction in the middle of a real conversation. After the classifier returns help, the router now checks whether there is an active 1-on-1 conversation (lastAssistantId exists) and whether the message contains an unambiguous capability cue (help, capabilit, what can you do, как использовать "how to use", что ты умеешь "what can you do", возможности "capabilities", etc.). If the message is a continuation without a strong cue, the help intent is overridden to single with the last responding agent, so a stray ambiguous phrase can't trigger a quadruple introduction.
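The override reduces to a small post-processing step on the classifier's output. A minimal sketch, assuming a plain substring match; applyHelpSafetyNet and CAPABILITY_CUES are hypothetical names, and the cue list contains only the examples named above (the real list is longer).

```typescript
type Intent = "single" | "group" | "help" | "brainstorm";

// Only the cues named in the text; the real list is longer.
const CAPABILITY_CUES = ["help", "capabilit", "what can you do", "как использовать", "что ты умеешь", "возможности"];

function applyHelpSafetyNet(
  intent: Intent,
  message: string,
  lastAssistantId: string | null,
): { intent: Intent; agentId?: string } {
  // Only a help verdict inside an active 1-on-1 conversation is second-guessed.
  if (intent !== "help" || !lastAssistantId) return { intent };
  const lower = message.toLowerCase();
  const hasCue = CAPABILITY_CUES.some((cue) => lower.includes(cue));
  // A continuation without a strong cue goes back to the last responding agent.
  return hasCue ? { intent } : { intent: "single", agentId: lastAssistantId };
}
```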

Resend preserves context. Clicking Resend on a user message used to truncate state to everything before the message and restart the turn with that history — the resent message itself vanished from both the UI and the agent's context, so the model answered blindly. Now the resent user message is kept in state and passed back as the latest turn, so the agent sees it and can answer coherently.
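The fix comes down to an off-by-one in how the history is truncated. A sketch with hypothetical names (ChatMessage here is a simplified stand-in, not the type in src/types/chat.ts): keeping the slice up to and including the resent message, rather than stopping just before it, is what lets the agent see the question again.

```typescript
interface ChatMessage {
  id: string;
  role: "user" | "assistant";
  content: string;
}

// Keeps everything up to *and including* the resent user message.
function prepareResend(messages: ChatMessage[], messageId: string): ChatMessage[] {
  const index = messages.findIndex((m) => m.id === messageId && m.role === "user");
  if (index === -1) return messages; // unknown id: leave state untouched
  return messages.slice(0, index + 1); // the old behaviour was slice(0, index)
}
```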

Conversation modes. Each mode injects an invisible instruction into the last user message in the API history (the user never sees it; the agent does):

| Mode | Extra instruction | Patches allowed? |
|---|---|---|
| help | "Introduce yourself in 2-3 sentences. Don't reference other agents." | No |
| brainstorm | "Generate 2-3 options with tradeoffs. Build on previous agents. Don't commit." | No (until user picks direction) |
| group (@all) | None (normal behaviour) | Yes |
| single | None (normal behaviour) | Yes |
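Injecting the instruction only into the API copy of the history keeps it invisible in the UI. A minimal sketch: the instruction strings are quoted from the mode table, while injectModeInstruction, patchesAllowed, ApiMessage and the bracketed suffix format are assumptions, not the plugin's actual code.

```typescript
type ChatMode = "help" | "brainstorm" | "group" | "single";

interface ApiMessage {
  role: "user" | "assistant";
  content: string;
}

// Instruction text from the mode table; group and single add nothing.
const MODE_INSTRUCTIONS: Partial<Record<ChatMode, string>> = {
  help: "Introduce yourself in 2-3 sentences. Don't reference other agents.",
  brainstorm: "Generate 2-3 options with tradeoffs. Build on previous agents. Don't commit.",
};

// Returns a new history; stored UI messages are untouched, so the user never sees the text.
function injectModeInstruction(history: ApiMessage[], mode: ChatMode): ApiMessage[] {
  const instruction = MODE_INSTRUCTIONS[mode];
  let lastUser = -1;
  for (let i = history.length - 1; i >= 0; i--) {
    if (history[i].role === "user") {
      lastUser = i;
      break;
    }
  }
  if (!instruction || lastUser === -1) return history;
  return history.map((m, i) => (i === lastUser ? { ...m, content: `${m.content}\n\n[${instruction}]` } : m));
}

// Patch gating per the table: help never patches; brainstorm waits for the user's pick.
function patchesAllowed(mode: ChatMode, userPickedDirection = false): boolean {
  if (mode === "help") return false;
  if (mode === "brainstorm") return userPickedDirection;
  return true;
}
```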

Multi-agent debate flow. When 2+ agents respond (sendMessage in useLocalChat.ts):

- Build the agent queue: agentQueueRef = [primary, ...rest].

- For each agent: streamAgent() → create an assistant message with agentId → stream the response.

- Each agent sees all previous agents' responses (in the API history, prefixed with [AgentName]: ...).

- 500ms pause between agents; hard stop at max responses (default 4).

- The Stop button or an abort immediately clears the queue.

- In @all debates, agents can merge or dispute each other's patches; see "Multi-agent proactive merging" in the system prompt.
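The sequential loop can be sketched as below, assuming streamAgent resolves with an agent's full reply once streaming ends. runDebate, StreamAgent and the string-based history are hypothetical simplifications of the queue in useLocalChat.ts; the 500ms pause, the default cap of 4 and the [AgentName]: prefix follow the list above.

```typescript
interface AgentReply {
  agentId: string;
  text: string;
}

// Assumed shape: streams one agent's answer and resolves with the final text.
type StreamAgent = (agentId: string, history: string[], signal: AbortSignal) => Promise<string>;

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

async function runDebate(
  queue: string[], // [primary, ...rest]
  baseHistory: string[],
  streamAgent: StreamAgent,
  signal: AbortSignal,
  maxResponses = 4,
  pauseMs = 500,
): Promise<AgentReply[]> {
  const replies: AgentReply[] = [];
  const history = [...baseHistory];
  for (const agentId of queue) {
    // Stop/abort or the response cap drops whatever is left in the queue.
    if (signal.aborted || replies.length >= maxResponses) break;
    if (replies.length > 0) await sleep(pauseMs); // pause between agents, not before the first
    if (signal.aborted) break;
    const text = await streamAgent(agentId, history, signal);
    replies.push({ agentId, text });
    // Later agents see earlier replies, prefixed with the speaker's name.
    history.push(`[${agentId}]: ${text}`);
  }
  return replies;
}
```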

What they cover. Systema internals (entities, modes, contexts, interactions, factor chains, HCT, contrast), color science (WCAG 2.x, APCA, CAM16-UCS, CVD), industry design systems (Material Design 3, Apple HIG, IBM Carbon, Adobe Spectrum, Atlassian, Salesforce Lightning, Ant Design), and token architecture (W3C DTCG, primitive / semantic / component tiers, multi-brand strategies).

Display modes

| Mode | Where | Size | When |
|---|---|---|---|
| Panel | Third panel to the right of preview | 440px fixed | Window ≥ 1360px (AUTO_PANEL_THRESHOLD) and aiPanelMode = "auto", or always when set to "panel" |
| Float | Bottom-right floating card with rounded corners | 320×480 | Otherwise. Preview cards and confirmation cards scale text and padding down (compact prop) so they read at the smaller scale |

The mode is controlled by Settings → AI Chat layout (auto / float). State (open / closed, active tab, scroll position) survives panel ↔ float transitions because it lives in the lifted chat handle in App.tsx.
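Mode resolution is a width check plus an explicit override. A minimal sketch, assuming aiPanelMode takes the values auto, panel and float (the table and the Settings label between them mention all three); resolveDisplayMode is a hypothetical name.

```typescript
type PanelSetting = "auto" | "panel" | "float";
type DisplayMode = "panel" | "float";

const AUTO_PANEL_THRESHOLD = 1360; // px

function resolveDisplayMode(windowWidth: number, aiPanelMode: PanelSetting): DisplayMode {
  if (aiPanelMode === "panel") return "panel"; // forced panel regardless of width
  if (aiPanelMode === "float") return "float"; // forced float regardless of width
  return windowWidth >= AUTO_PANEL_THRESHOLD ? "panel" : "float";
}
```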

Tools

Three native Anthropic tool_use definitions, declared in src/lib/ai-api.ts:

- apply_theme_patch: the agent's main lever. Accepts a partial Theme.color (colors, entities, interactions, conditions, modes) plus a top-level themeSettings field for theme-wide booleans (exportIncludeMeta, pushIncludeMeta). The full set of patchable keys is in patch-validate.ts → patchableKeys. Each call creates one Apply / Skip confirmation card in chat with a per-item preview: color swatches with the editor's dashed-outline rule for very-light/dark hexes, Add vs Update verb chips diffed against the current theme, entity bindings, and entity-type meta. Skip is undoable too, and both Apply and Skip statuses are fed back to the agent on its next turn via tool_result blocks so it can react. Patch validation happens in src/lib/patch-validate.ts before the user sees the card, and it distinguishes two failure modes:

  - Structural rejection (red "Patch rejected (invalid structure)" banner): missing id/name/hex on new items, a wrong entityType enum, entity colorIds referencing colors that exist neither in the current theme nor in the same patch, invalid hex format (full 6-digit #rrggbb only, matching the tool schema in ai-api.ts), out-of-range contrastFactor/chromaFactor, mutating an existing entity's entityType, a top-level id or color wrapper, etc. The banner is expandable: click it to see the full formatted error list inline (the same list fed to the agent as tool_result), so triage doesn't require opening DevTools. New frame/top-layer entities also require contrastRatios (an array of finite numbers); on updates the array is type-checked when present.

  - No-op rejection (softer amber "Patch had no effect" banner): the patch was structurally valid, but every field the agent sent deep-equals the current theme. Detection uses a generic per-field deep-equal over the keys the agent explicitly provided (see detectNoOpPatch in patch-validate.ts), so updates to contrastRatios, levelConfig, previewShape, mode.seedColor, mode.contrastFactor, interaction.events, context.interactionIds, etc. correctly register as changes. The earlier hand-maintained field whitelist falsely flagged such updates as no-ops and raised the red banner for a structurally fine patch. The amber banner's tool_result is still is_error: true, so the agent retries with a concrete delta rather than resending the same patch.

  - Both failure modes also log "[chat] apply_theme_patch rejected/… flagged as no-op" to the browser console with the full error list, the patch, and the model's text, so regressions can be traced from DevTools.


- propose_plan: manager-capable agents (DaiDai, Pappah, Mammah) emit a 2–6-step plan when the user asks for a substantial restructure. Steps can be patch (next-turn apply_theme_patch), delegate (@mention another agent), or verify. The plan is rendered as a ChatPlanCard with Approve / Reject; on Approve, the manager executes it step by step over the next several turns.

- send_message_burst: agents may break a single response into multiple short bubbles for conversational rhythm. This is controlled by the per-agent Playfulness setting (rare for DaiDai/Pappah; occasional for Mammah/Yetish; never for analysis).
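Two of the validation rules above are easy to show in isolation: the strict #rrggbb check and the no-op test that compares only the keys the agent actually sent. This is a simplified sketch, not patch-validate.ts: isValidHex, deepEqual, isNoOpPatch and the Item / ColorPatch types are hypothetical, and patches are assumed to be arrays of items matched to the current theme by id.

```typescript
// Full 6-digit #rrggbb only; shorthand like #abc is rejected.
const HEX_RE = /^#[0-9a-fA-F]{6}$/;

function isValidHex(value: unknown): boolean {
  return typeof value === "string" && HEX_RE.test(value);
}

// Structural deep-equal for JSON-like values (objects, arrays, primitives).
function deepEqual(a: unknown, b: unknown): boolean {
  if (a === b) return true;
  if (typeof a !== "object" || typeof b !== "object" || a === null || b === null) return false;
  if (Array.isArray(a) !== Array.isArray(b)) return false;
  const keysA = Object.keys(a);
  const keysB = Object.keys(b);
  if (keysA.length !== keysB.length) return false;
  return keysA.every(
    (k) =>
      Object.prototype.hasOwnProperty.call(b, k) &&
      deepEqual((a as Record<string, unknown>)[k], (b as Record<string, unknown>)[k]),
  );
}

type Item = { id: string } & Record<string, unknown>;
type ColorPatch = Partial<Record<string, Item[]>>;

// True when every field the agent explicitly sent already matches the current theme.
function isNoOpPatch(patch: ColorPatch, current: Record<string, Item[]>): boolean {
  for (const [collection, items] of Object.entries(patch)) {
    for (const item of items ?? []) {
      const existing = (current[collection] ?? []).find((c) => c.id === item.id);
      if (!existing) return false; // a new item is an Add, which is always a change
      for (const key of Object.keys(item)) {
        if (key !== "id" && !deepEqual(item[key], existing[key])) return false;
      }
    }
  }
  return true;
}
```

Comparing only the provided keys is what lets nested fields such as contrastRatios register as changes without a hand-maintained whitelist.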

Context management (per-message compaction)

There's no single growing summary block. Instead each assistant message, once streamed, kicks off a background Haiku call (compactSingleMessage) to generate a 1–3 sentence compactContent summary. As the chat fills up, the history reconstruction in useLocalChat.ts walks newest-to-oldest and silently swaps older messages for their compact summaries when the running total exceeds 55% of the model's context window. The oldest summaries fall off entirely when even that doesn't fit.
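The newest-to-oldest walk can be sketched as a greedy pass over the message list. Assumptions: buildHistory and HistoryMessage are hypothetical, tokens are estimated at roughly 4 characters each (the real estimator is not described here), and a message that fits neither in full nor as its compactContent summary ends the walk, so everything older is dropped.

```typescript
interface HistoryMessage {
  id: string;
  content: string;
  compactContent?: string; // 1-3 sentence summary produced in the background
}

// Hypothetical estimate: roughly 4 characters per token.
const estimateTokens = (text: string): number => Math.ceil(text.length / 4);

function buildHistory(messages: HistoryMessage[], contextWindow: number, budgetRatio = 0.55): string[] {
  const budget = contextWindow * budgetRatio;
  const picked: string[] = [];
  let total = 0;
  // Walk newest-to-oldest so recent turns keep their full text longest.
  for (let i = messages.length - 1; i >= 0; i--) {
    const { content, compactContent } = messages[i];
    const full = estimateTokens(content);
    if (total + full <= budget) {
      picked.push(content);
      total += full;
      continue;
    }
    // Over budget: fall back to the compact summary if one exists and fits.
    if (compactContent !== undefined && total + estimateTokens(compactContent) <= budget) {
      picked.push(compactContent);
      total += estimateTokens(compactContent);
      continue;
    }
    break; // nothing older fits: the oldest messages fall off entirely
  }
  return picked.reverse(); // restore chronological order
}
```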

The header gauge in the chat is click-to-toggle — it opens a slide-out Context panel under the header (ChatContextPanel) showing:

- Title: Model context window

- Bar gauge with current usage (e.g. ~4.0K of 200K · 2%); colour goes amber at 75% and destructive at 100%

- Short explainer: Older messages are auto-summarised, then dropped when full.

- Download as Markdown — full chat export (see below)

- Clear history — wipes all messages with confirmation dialog

When the chat is empty, the gauge button is still visible and the Download / Clear buttons are present but disabled with explanatory tooltips.
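The gauge label and colour thresholds reduce to a little arithmetic. The formatK rule below (one decimal under 10K, whole thousands above) is an assumption chosen only to reproduce the ~4.0K of 200K · 2% example; gaugeLabel, gaugeTone and GaugeTone are hypothetical names.

```typescript
type GaugeTone = "normal" | "amber" | "destructive";

// Assumed rule: one decimal below 10K tokens, whole thousands above.
function formatK(tokens: number): string {
  return tokens < 10000 ? `${(tokens / 1000).toFixed(1)}K` : `${Math.round(tokens / 1000)}K`;
}

function gaugeLabel(used: number, windowSize: number): string {
  const pct = Math.round((used / windowSize) * 100);
  return `~${formatK(used)} of ${formatK(windowSize)} · ${pct}%`;
}

// Colour thresholds from the text: amber at 75%, destructive at 100%.
function gaugeTone(used: number, windowSize: number): GaugeTone {
  const ratio = used / windowSize;
  if (ratio >= 1) return "destructive";
  if (ratio >= 0.75) return "amber";
  return "normal";
}
```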

Other chat features

- Smooth streaming: text is revealed gradually via a requestAnimationFrame buffer rather than dumped raw from SSE. Tool input is parsed progressively from input_json_delta events, so the bubble shows "Building patch: 3 colors..." while the model is still generating it.

- Markdown rendering: headers, bold, italic, code blocks, lists, tables, inline color swatches (#hex → circular swatch, with the dashed-outline rule for very-light/dark hexes), and inline message references ([label](msg:m_xxx)).

- Extended thinking: a collapsible reasoning block with a shimmer text animation, rendered whenever the provider emits a thinking block on the stream. It auto-expands while streaming, auto-collapses when done, and persists the thinking duration. There is no auto-enable heuristic in the UI; the feature surfaces only if the currently selected model returns thinking blocks.

- File attachments: attach up to 4 files via the paperclip or drag-and-drop (~4MB each). Supported types: images (png, jpeg, gif, webp), SVG, and text-based files (.md, .json, .css, .js, .ts, .txt). Images show thumbnail previews; code/text files show typed icons ({·} for code, Md for markdown) with cycling background colors (emerald → blue → orange → purple) to differentiate same-type files. Attachments are stripped before persistence.

- Markdown as context: when an attached .md file looks like a conversation transcript or chat export, agents automatically treat it as prior conversation context, allowing cross-session continuity. README-style docs are treated as system context.

- Image lightbox: click any image thumbnail (in the input area or in chat history) to open a full-size overlay with left/right navigation, keyboard arrows, and Escape to close. Images scale to 80% of the viewport.

- Model selection: in Settings → AI, models are fetched live from each provider's /v1/models endpoint, so the list reflects whatever is currently available on that account. The token budget for the gauge is read from the active model's metadata. Extended thinking is not auto-enabled by the UI; it is rendered passively when the provider returns thinking blocks.

- Chat persistence: the last 50 messages (MAX_PERSISTED) are stored in figma.clientStorage under the key systema_chat_v3_{fileKey}_{themeId} as LZ-string-compressed JSON. Storage is per-user, per-file, and per-theme: switching themes loads that theme's own chat, and switching back restores it. Image attachments are stripped before saving.

- Markdown export: Download as Markdown in the Context panel writes the full chat (text + tool calls + bursts + status) as a .md file via src/lib/chat-export.ts (buildChatMarkdown + downloadChatAsMarkdown).

- Floating input card: the message input floats as a rounded card over the chat scroll area. Layout: attachment thumbnails → reply bar → full-width textarea → action bar (paperclip, @mention, /slash, send). The textarea auto-grows to 3 lines (float) or 5 lines (sidebar), then scrolls.

- Slash commands: type / or click the slash button to open a command popup (same UX as @mentions). Available: /help (all agents introduce themselves) and /brainstorm (divergent thinking with all agents). Completed commands render as bordered pills in the input overlay.

- Smart auto-scroll: the view auto-scrolls to the bottom only when the user is already within 20px of it, so it doesn't interrupt a manual scroll-up during streaming.

- Agent edit permissions: a Settings → Danger Zone toggle. In managed-only (the default), figma-context-query and figma-rename-collection only see and write Systema-tagged collections; all lifts the safety net for foreign variable collections (still no canvas access). Enforced in the plugin sandbox (src/plugin/code.ts) via the AGENT_HANDLERS allowlist plus a fromAgent flag on every postMessage from the chat hook.

- Family / Debug modes: Settings toggles. Family adjusts the personality block; Debug adds a View trace entry to the message context menu showing the routing decision, model used, token estimates, tool call summary, and duration.

- API key validation: checked in real time on a 600ms debounce (spinner → green check / "Invalid").
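The persistence step (storage key, 50-message cap, attachment stripping) can be sketched as a pure transform. chatStorageKey, toPersisted, PersistedMessage and StoredAttachment are hypothetical names; the result would then be serialized, compressed (the lz-string package provides compressToUTF16, for example, though which variant the plugin uses is not stated) and written with figma.clientStorage.setAsync.

```typescript
interface StoredAttachment {
  kind: "image" | "text";
  name: string;
  data: string;
}

interface PersistedMessage {
  id: string;
  content: string;
  attachments?: StoredAttachment[];
}

const MAX_PERSISTED = 50;

// One key per file and theme, so each theme keeps its own chat.
function chatStorageKey(fileKey: string, themeId: string): string {
  return `systema_chat_v3_${fileKey}_${themeId}`;
}

// Keeps the newest MAX_PERSISTED messages and drops attachments before saving.
function toPersisted(messages: PersistedMessage[]): PersistedMessage[] {
  return messages.slice(-MAX_PERSISTED).map((message) => {
    const copy: PersistedMessage = { ...message };
    delete copy.attachments; // attachments are never persisted
    return copy;
  });
}
```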

The system prompt (CHAT_SYSTEM in src/lib/ai-api.ts) injects the full Systema data model, color science reference, industry benchmarks, the current theme as JSON, the agent's personality / lens / verbosity guidance, the relationship matrix, and a list of FIXED structural decisions (entityType, nesting model, HCT generation, factor chain order) the agents must not propose changing.

Files

- src/lib/ai-api.ts: streamChatMessage(), compactSingleMessage(), analyzeScreenshots(), generateThemeFromPrompt(), editThemeWithAI(), tool schemas, system prompt builder

- src/lib/ai-prompts.ts: SYSTEMA_CONTEXT (data model description) + per-task prompts

- src/lib/agents/: DaiDai, Pappah, Mammah, Yetish persona definitions (lens, humor, influences, debateStyle, proactiveRule, unified defaults block)

- src/lib/mention-utils.ts: multilingual @mention router

- src/lib/chat-export.ts: buildChatMarkdown(), downloadChatAsMarkdown()

- src/lib/patch-utils.ts: bindNewColorsToEntities() and patch helpers

- src/lib/patch-validate.ts: pre-flight structural validation for apply_theme_patch payloads

- src/lib/ai-config-mapper.ts: visionResultToTheme(), generateResultToTheme()

- src/components/AIEditDialog.tsx: the modal for Edit with AI

- src/components/chat/: AIChatPanel, ChatHeader, ChatContextPanel, ChatInput, ChatMessageBubble, ChatConfirmation, ChatPlanCard, ChatCompactedBlock (legacy single-block compaction renderer for older chats), ChatThinkingBlock, ChatProgressIndicator, ChatEmptyState, ChatAttachmentPreview, ChatMarkdown, ChatAgentsFooter, AgentsPanel, AgentDialog (per-agent overview + settings), CompactButton, ChatDebugPanel, ImageLightbox, agent-avatars

- src/hooks/useLocalChat.ts: chat state, history reconstruction with gradual context cap, tool flow, mention routing, multi-agent debate queue

- src/types/chat.ts: chat-specific type definitions (ChatMessage, ToolCall, BurstPart, MessageTrace)